The Language Interpretability Tool: Extensible, Interactive Visualizations and Analysis for NLP Models

Ian Tenney, James Wexler, Jasmijn Bastings, Tolga Bolukbasi, Andy Coenen, Sebastian Gehrmann, Ellen Jiang, Mahima Pushkarna, Carey Radebaugh, Emily Reif, and Ann Yuan

In Proceedings of the 2020 Conference on Empirical Methods in Natural Language Processing: System Demonstrations, 2020

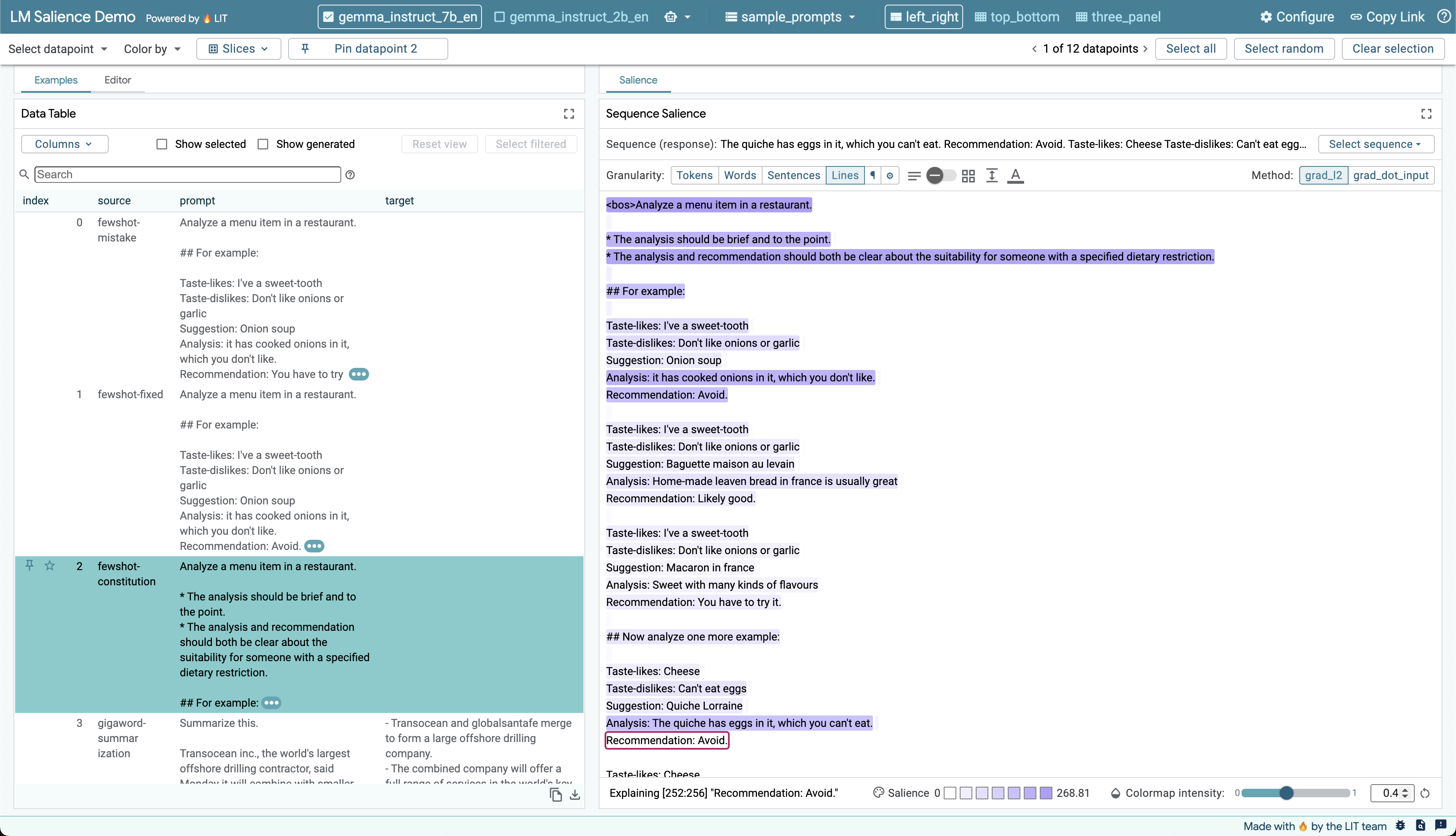

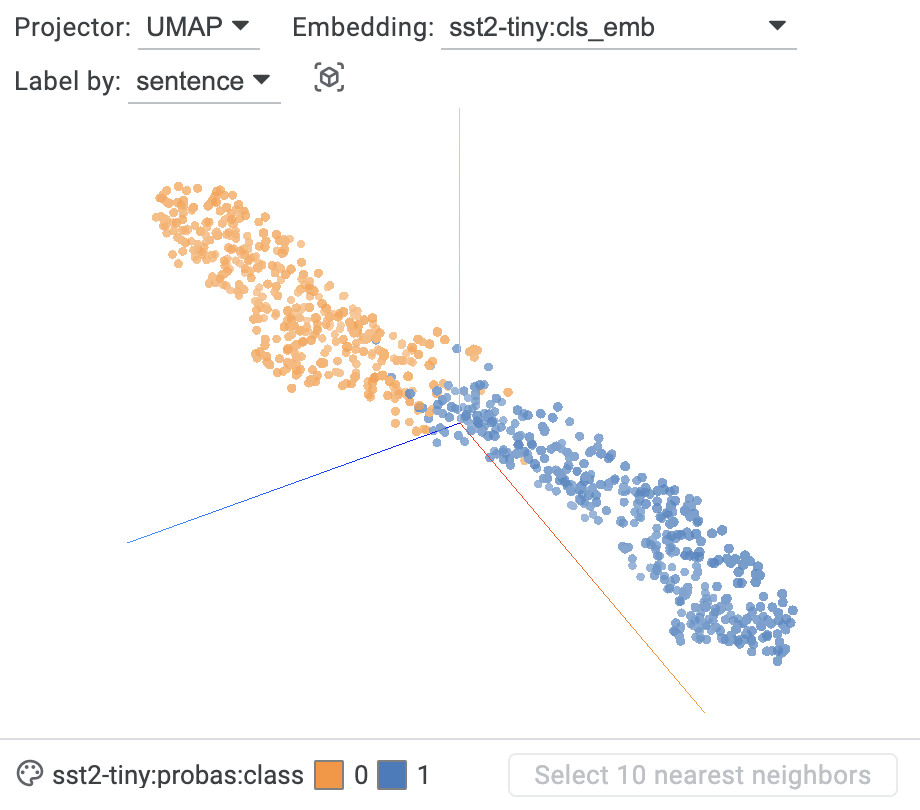

We present the Language Interpretability Tool (LIT), an open-source platform for visualization and understanding of NLP models. We focus on core questions about model behavior: Why did my model make this prediction? When does it perform poorly? What happens under a controlled change in the input? LIT integrates local explanations, aggregate analysis, and counterfactual generation into a streamlined, browser-based interface to enable rapid exploration and error analysis. We include case studies for a diverse set of workflows, including exploring counterfactuals for sentiment analysis, measuring gender bias in coreference systems, and exploring local behavior in text generation. LIT supports a wide range of models—including classification, seq2seq, and structured prediction—and is highly extensible through a declarative, framework-agnostic API. LIT is under active development, with code and full documentation available at https://github.com/pair-code/lit.

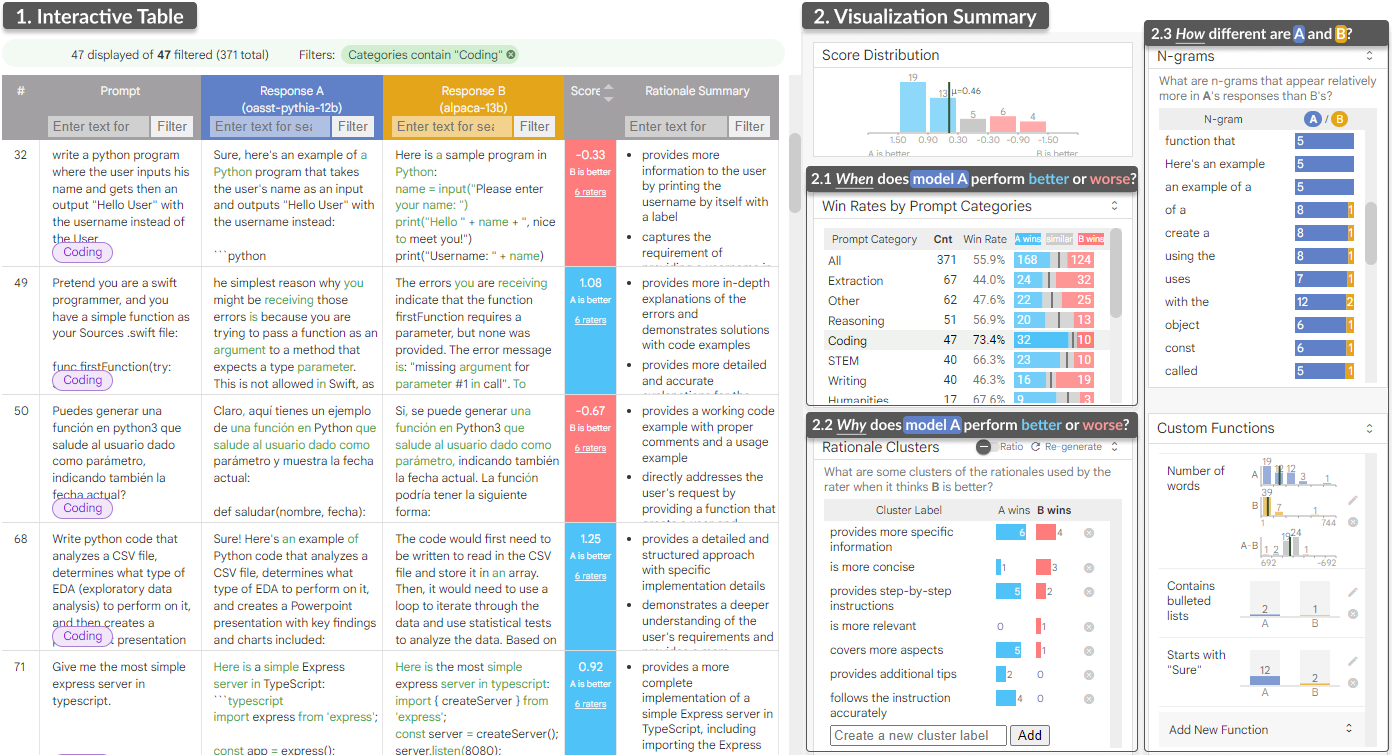

LLM Comparator: Visual Analytics for Side-by-Side Evaluation of Large Language Models. IEEE Transactions on Visualization and Computer Graphics, 2024
